The Assembly Line in the Sky

The True Costs of Progress and the Need to Scale by Imagination, Not Extraction

The dormitory window was four stories above concrete when Tian Yu jumped.

It was March 17, 2010. She was 17 years old. Exactly 37 days earlier, she had stepped into Foxconn City, Shenzhen’s sprawling factory complex where millions of iPhones were assembled. She had left her village to pursue skills and wages, seduced by a line in the employee handbook: “Your dreams extend from here until tomorrow.”

Reality was different. She found instead robotic repetition. Three iPhone screens were polished every minute, totaling 1,700 a day. Errors were punished by public humiliation and reinforced by self-criticism.

Her first month’s pay was lost to “administrative errors.” Company dorms were crammed with strangers on shifting schedules, erasing any chance of making friends. She would later call it “a massive place of strangers.”

Her final shift ended at 2 a.m. At dawn, she opened her dorm window and stepped out.

She survived the fall. But it left her paralyzed from the waist down.

From Damage Control to Absolution

Apple’s first reaction was deflection. “Foxconn is not a sweatshop,” Steve Jobs told a conference audience. “For a factory, it’s pretty nice.”

Tim Cook, then COO and master of Apple’s supply chain, responded to the controversy with an internal email, in which he declared that any suggestion of Apple not caring about its workers was “patently false and offensive.”

But headlines continued to pile up. The story wouldn’t go away. Protesters staged mock funerals outside Apple Stores. Eventually Apple joined the Fair Labor Association and opened its suppliers, including Foxconn, to independent audits.

The findings were damning:

Workweeks routinely exceeding China’s legal 49-hour limit

Seven straight workdays without rest

Wages that were insufficient to cover basic living needs

Nearly half of workers witnessing on-the-job accidents

Cook had no choice but to press for sweeping changes. Foxconn made thousands of new hires to cut back hours, upgraded worker housing and safety, and paid overtime in full.

Apple, for its part, began conducting surprise inspections. In 2024 alone, Apple performed 893 supplier assessments, 22% of which were unannounced. The message? We’ll show up anytime, anywhere.

Such vigilance has become standard today, but only on one condition: the human suffering must be visible. The moment consumers ask, “Who made my iPhone?”, companies cannot look away. Regulators stand ready to pounce. Watchdogs watch.

And that’s the lesson facing AI’s industrial future.

The hidden cost of progress will remain hidden only until someone, somewhere, decides to look.

The Cloud's Hidden Factory: Servers, Sweat, and Sorrow

A smartphone is tangible. So is a T-shirt from H&M, or a pair of sneakers from Temu. We can picture a factory floor along with its machinery and rows of workers.

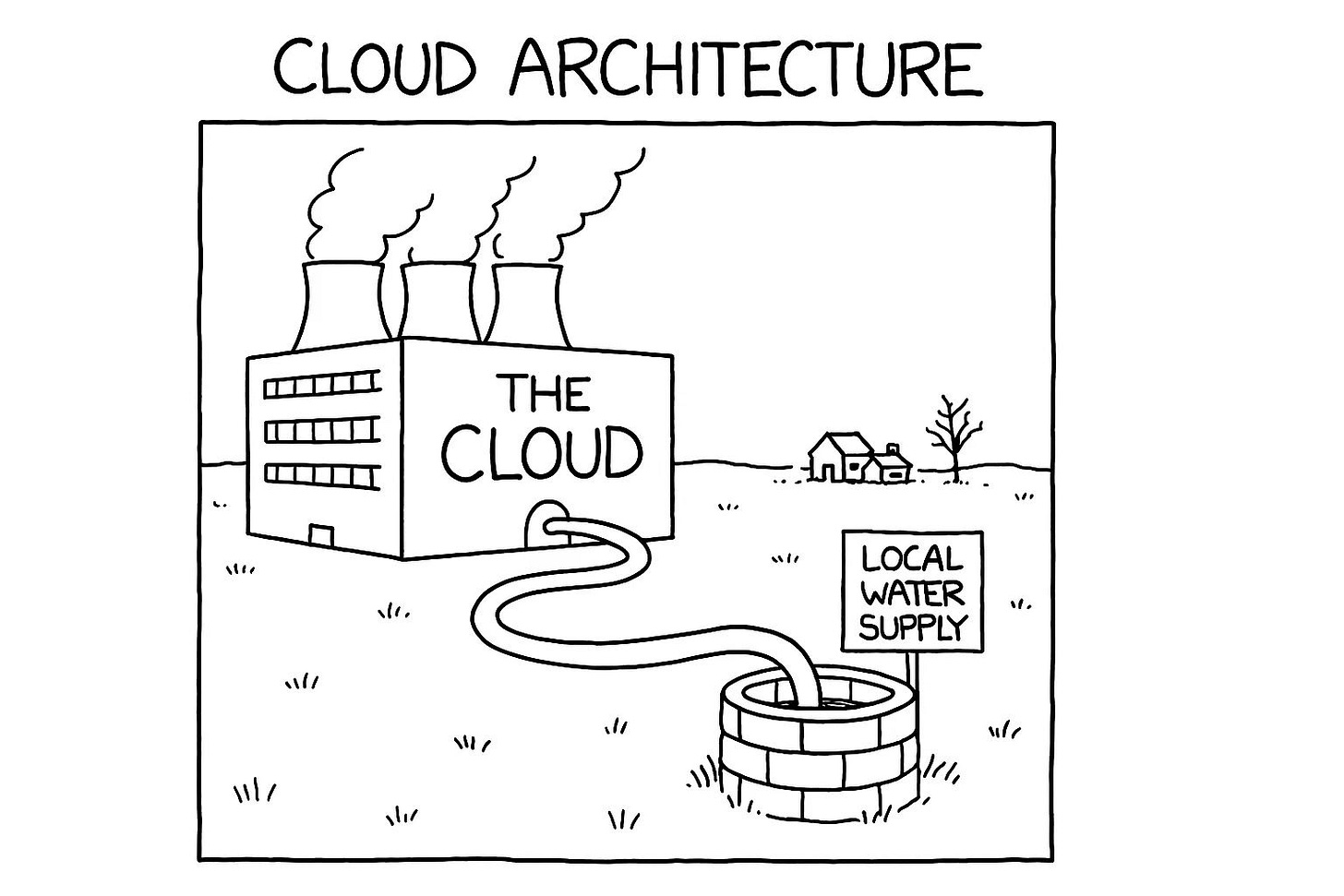

But with AI, everything dissolves into abstraction. The moment we began calling remote servers “the cloud,” we allowed ourselves to forget their physical reality. There is nothing in the sky. Servers sit firmly on the ground, residing in massive, windowless warehouses filled with a constant mechanical whir.

Step inside one of these data centers and you’ll feel it: a hot gust behind a rack of machines, proof of how much heat all those whirring servers produce. Technicians know the constant danger of a “thermal runaway event,” a cascading failure where cooling systems falter and heat multiplies on itself.

In seconds, rows of blinking green and blue lights can shift into a foreboding red. When that happens, the air thickens, growing hotter and hotter, threatening to melt the machinery.

Then, with a roar, massive air conditioning units flood the room with an arctic chill, restoring order.

This is the physical, thermodynamic reality of "the cloud."

Until recently, I carried the naïve picture that generative AI was simply the handiwork of a few brilliant coders in California, typing away until, voilà, the latest and most powerful large language model (LLM) appeared.

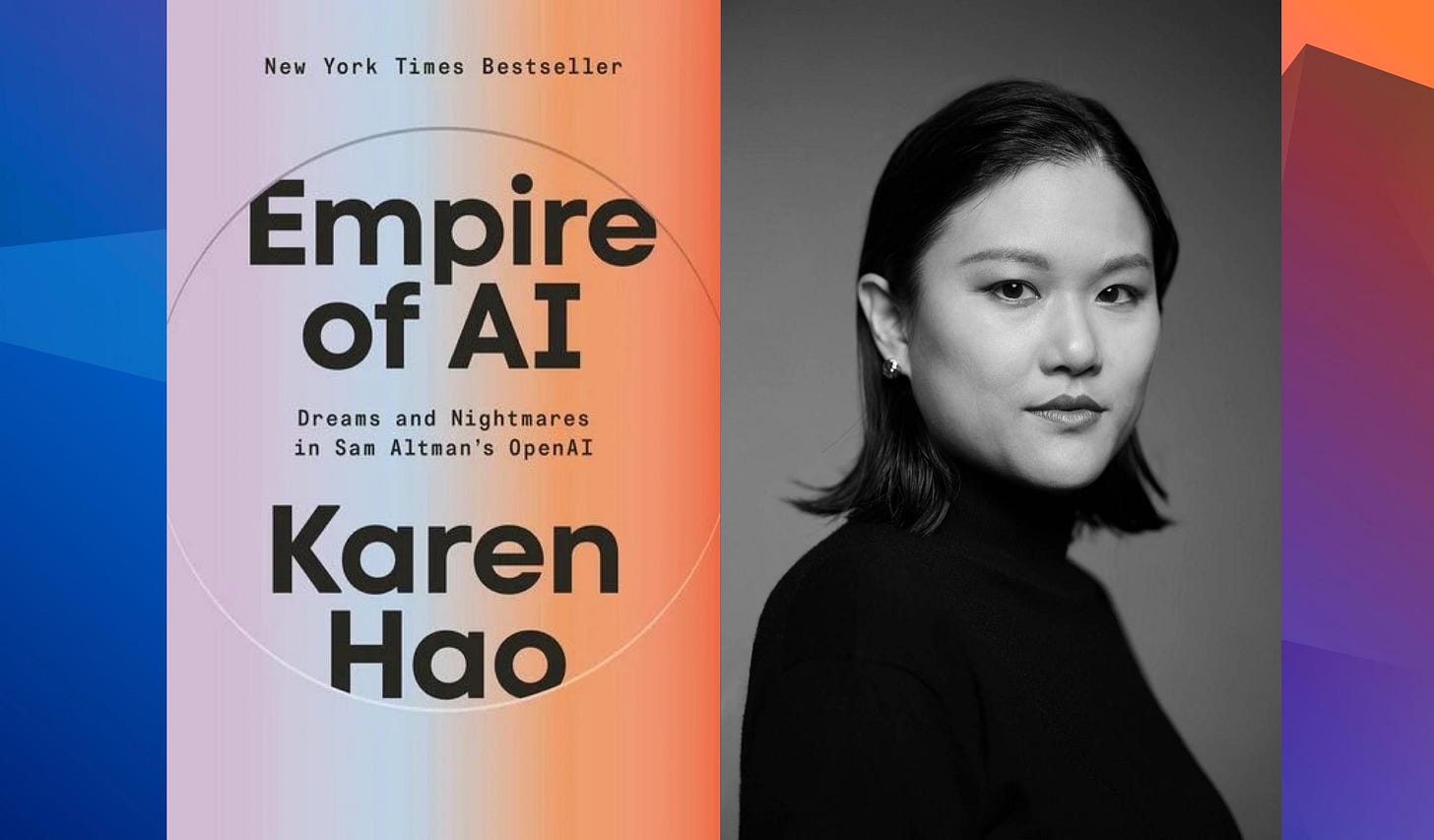

Then I read Karen Hao’s Empire of AI. Hao, a leading AI journalist and former Wall Street Journal tech correspondent, draws on years of reporting across Silicon Valley, China, and Africa. Machine learning, as it turns out, is profoundly labor-intensive. Before an AI model can appear fluent and clever, it must be trained.

Remember how the early versions of ChatGPT could be coaxed into producing graphic violence or extremist hate speech? The fix was obvious but grueling: Sanitize the data before the AI learned from it. The process involved hiring humans to manually label and filter toxic content.

The factory line never disappeared. It just went invisible.

The New Foxconn

In 2021, OpenAI quietly outsourced this work to Africa. Of course, it wasn’t something that just anyone could do. Workers needed solid English skills and enough education to follow nuanced guidelines. They also had to be economically desperate enough to do the work, in countries where government oversight was weak and speed was everything.

In Nairobi, Kenya’s bustling capital, young graduates in math and computer science sat before glowing screens, day after day, peering into the internet’s darkest corners. Hired through the contractor Sama, they were tasked with filtering and labeling violent, disturbing content to make the world’s AI appear “safe.” It was invisible work on a digital assembly line. And what were they paid? Between $1.46 and $3.74 an hour, just enough to scrape by in a city where comfort was out of reach.

The psychological toll was crushing. Nightmares, anxiety attacks, and depression were common. Counselors were technically on call, but often ill-equipped to handle trauma of this magnitude.

Mophat Okinyi, one of these workers, remembered sifting through 700 horrific text passages a day. Eventually, the images leaked into waking life. He grew paranoid in public and emotionally numb at home.

“I’m very proud that I participated in that project to make ChatGPT safe,” he told Karen Hao. “But now I always ask myself: Was my input worth what I received in return?” His pregnant wife left him, saying he was “a changed man.”

He said simply, “It has destroyed me completely.”

The Invisible Workforce

From the early self-driving car boom to today’s expert systems, humans have always been involved. But now, data-annotation firms have become the Foxconn of the digital age. These contractors make our “smart” digital lives possible, yet they operate almost entirely out of sight.

One billion-dollar player is Scale AI, founded in 2016 by MIT dropout Alexandr Wang. Hao recalls asking a former manager, who had overseen the company’s workforce expansion, how Scale had landed clients like Lyft, Apple, Toyota, and Airbnb. The answer was a simple mandate: “Get the best people for the cheapest amount possible.”

That philosophy drove Scale to recruit in Kenya and the Philippines. More recently, Scale has tapped Venezuela, where economic collapse has left a highly educated population desperate for work. As AI’s language needs expanded, the same playbook applied: Recruiting French speakers from former French colonies in Africa, Mandarin speakers from the Chinese diaspora in Southeast Asia, and so on.

I realized that this is no cottage industry; it’s an industrial complex. It’s always the same invisible assembly line, just with different faces. As of June 2025, Scale AI is valued at more than $29 billion. And Wang, the company’s founder, has become one of the youngest self-made billionaires in Silicon Valley’s history.

The Thirsty Leviathan

In an interview with Bloomberg, Wang reduced AI’s empire to three pillars: data, compute, and algorithms.

Data labeling propelled Scale AI to prominence. Compute sent Nvidia’s valuation into the stratosphere and is also the engine behind Stargate, the $500 billion mega-project from OpenAI, Oracle, and SoftBank. It underpins Meta’s ambition to build a data center the size of Manhattan.

And just as the human labor behind AI remains hidden, so too does its hunger for physical resources. The footprint is not only human. It is geological.

A single 100-megawatt AI data center gulps 2 million liters of water every day just to stay cool. That’s the daily water supply for 6,500 homes.
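For readers who want to check that comparison, here is a minimal sketch of the arithmetic. It uses only the two figures quoted above; the function name and the idea of an "implied" per-home figure are my own illustration, not something taken from the underlying reporting.

```python
# Figures quoted in the text above; nothing here is measured independently.
DAILY_WATER_LITERS = 2_000_000  # one 100-megawatt AI data center, per day
HOMES_SUPPLIED = 6_500          # homes said to use the same amount per day


def liters_per_home(total_liters: float, homes: int) -> float:
    """Average daily liters per home implied by the comparison (hypothetical helper)."""
    return total_liters / homes


implied = liters_per_home(DAILY_WATER_LITERS, HOMES_SUPPLIED)
print(f"Implied household use: {implied:.0f} liters per day")
```

The comparison works out to a little over 300 liters per home per day. Whether that matches real household consumption depends on the region, so treat it as a rough sanity check rather than a measurement.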

In Arizona’s 117-degree heat, this thirst takes grotesque form. Technicians patch up evaporative cooling systems nicknamed The Mouth: Pipes push sediment-rich Colorado River water into honeycomb filters, where it congeals into “oozy soot” before vanishing into desert air.

Cooling alone can devour more than 40% of a facility’s total electricity.

Meanwhile, nearby communities battle drought, and politicians denounce “inessential” water use.

In the past three years alone, more than 160 new AI data centers have risen across U.S. counties locked in a quiet competition for scarce water. The locations of these counties are predictable: Arizona, West Texas, and Southern Virginia. Cheap land. Abundant wind and solar. Generous tax breaks.

In this corporate calculus, water is just another input on the balance sheet. It’s something that you can buy until, one day, the well runs dry.

Chile learned this lesson early. In 2022, Google planned a $200 million data center in Santiago. The plan set off protests so fierce that authorities temporarily revoked Google’s permit. Residents could not fathom why their dwindling aquifers should cool YouTube’s servers instead of irrigating farmland.

That same unease is now flaring in the United States. From Mesa to Tucson to Chandler, residents have staged protests against the endless low hum of server halls and the voracious pull on their water supplies.

For David Gray, a Chicago software engineer, the inescapable drone from a nearby data center became “a constant, undesired companion” during Covid lockdowns. The noise was so relentless that it eventually became a source of hypertension and anxiety requiring clinical therapy.

It doesn’t have to be this way. But the solution won’t come from engineering alone.

It will take collective re-imagination.

Reimagining the Beast

When Microsoft announced plans for a massive data center in Quilicura, Chile, resistance was swift. But something else happened too. Activists, architects, and students began to ask, “What if this box of servers could live with the community, not apart from it?”

Instead of a fortress of concrete and fences, they imagined something porous. In one design, the facility’s cooling reservoirs became public pools, with every drop visible and shared.

Another sketch featured a “fluid data territory” where raised walkways wound through marshes, with server halls hidden among native plant nurseries. In this vision, the machines themselves would help purify marsh water, sending a clean supply back to the aquifer.

Those outside voices, from residents to students to environmentalists, brought ideas that most software engineers would never have considered. Even if the proposals remain nudges rather than blueprints, they reveal a larger truth: AI cannot scale by extraction alone.

It must be scaled by re-imagination.

Beyond So-So Automation: Reimagining AI's Future

What stands between our present reality and a better one is not technical limitation. Rather, it’s our unwillingness to reimagine, often reinforced by our own fear. We tell ourselves that AI will inevitably automate everything. That is a myth.

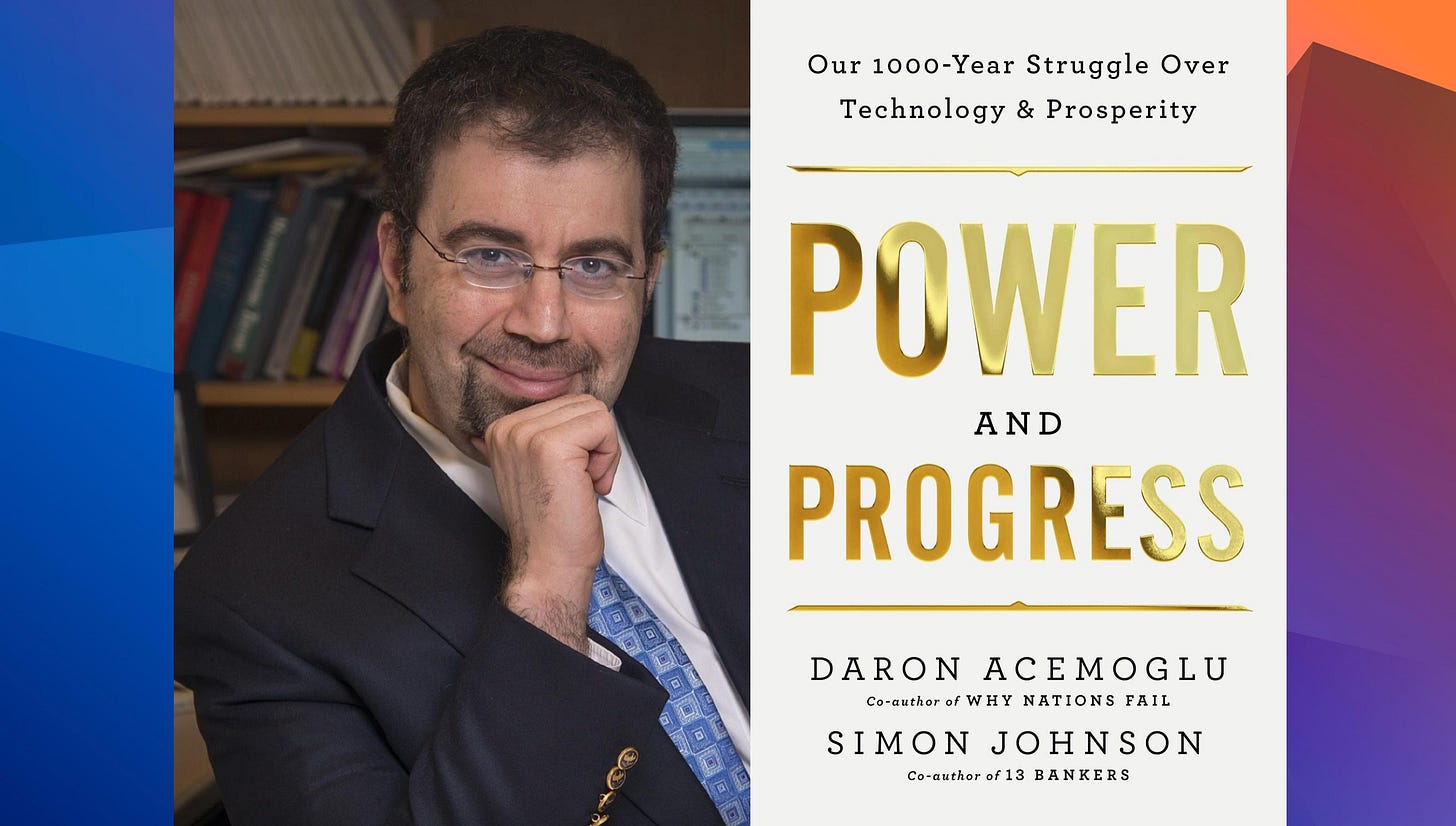

Behind every seemingly inexorable technological advance lie human choices about where and how it should be deployed. Nobel Prize–winning economist Daron Acemoglu and MIT’s Simon Johnson have warned of the persistent temptation for companies to pursue “so-so automation,” which involves replacing workers for the sake of automation itself, with little benefit to customers or productivity.

In Power and Progress, they use the self-checkout as a vivid example. The task is simply offloaded to the customer, cashier jobs vanish, and the improvement in satisfaction is negligible. The point that Acemoglu and Johnson drive home is this: Technological progress is rarely deterministic. We choose the path.

Amazon’s “Go” stores — built on computer vision, remote monitoring, and mobile apps so that customers can “just walk out” — illustrate the point. The technology works, mostly. But the benefit is marginal, the infrastructure cost immense, and the main outcome is nothing more than job displacement.

The same is true for AI’s trajectory. No law of nature says we must scale the way we do now. We could invent new cooling systems, develop cleaner energy sources, or design safer, more dignified ways for humans to participate in data labeling.

Yet a political narrative is taking hold: If we don’t scale as fast and as big as possible, China will overtake us. It’s a narrative that strongly appeals to Silicon Valley, and one that conveniently justifies its current methods without scrutiny.

Beyond Bigger Models: The Limits of Scale

Yet even if you buy the premise of strategic rivalry, scaling blindly is a dead end. The underwhelming launch of OpenAI’s much-hyped GPT-5 model illustrates this point. OpenAI’s CEO Sam Altman billed GPT-5 as “a significant step on the path to AGI [Artificial General Intelligence].” But reality did not live up to the promise.

Within days, users saw the new model making basic errors (mislabeling a U.S. map, for example) and delivering only minor improvements over its predecessor. People “expected to discover something totally new” from GPT-5, noted Thomas Wolf of Hugging Face, but “we didn’t really have that.”

Yann LeCun, Meta’s chief AI scientist and a Turing Award winner, recently declared that he’s “not so interested in LLMs anymore.” He was referring to the fact that simply making large language models bigger yields diminishing returns. “We are not going to get to human-level AI by just doing bigger LLMs,” he argues.

Instead, he champions new research directions that are less data-hungry and less reliant on armies of underpaid human labelers. Joelle Pineau, former head of Meta AI research and now chief AI officer at startup Cohere, echoes this view: “Simply continuing to add compute won’t be enough.”

If we want moonshots or the AGI on which Sam Altman so insists, we need machines that understand the physical world, have persistent memory, and can reason and plan.

In short, we need a painful pivot in the current approach to get us over the fence.

Bending the Arc of Innovation

Progress is rarely linear. And we must reject the fiction that scaling equals progress. Rebecca Solnit writes in Hope in the Dark, “Cause-and-effect assumes history marches forward, but history is not an army. It is a crab scuttling sideways, a drip of soft water wearing away stone, an earthquake breaking centuries of tension.”

What once seemed impossible eventually tips into reality, only because ordinary people kept chipping away at the problem until the breakthrough moment arrived.

I like her theory because it refuses despair: “Your opponents would love you to believe that it’s hopeless… Hope is a gift you don’t have to surrender, a power you don’t have to throw away.”

Yes, ChatGPT is now the fifth-most visited website in the world, just behind Instagram and already ahead of X. OpenAI says the system receives a staggering 2.5 billion prompts every day. Its scale feels unstoppable.

And yet, nothing about our future is preordained.

Companies can redesign data labeling. Scientists can invent more efficient cooling. Governments can prioritize dignified labor. Programmers can pioneer new approaches. And Alexandr Wang — Scale’s co-founder and now Meta’s chief AI officer — is just getting started, and he may well rewrite the rules.

We all have a role to play, if we choose to take it:

If you run a small company, design AI systems with efficiency and transparency that pressure bigger players to improve.

If you run a non-tech business, ask your vendors the hard questions about sustainability and ethics.

If you’re a consumer or concerned citizen, support policies and products that prioritize responsible AI, and be vocal about it.

Bit by bit, our technology can align with our values, “not by magic, but by the incremental effect of countless acts of courage, love, and commitment.”

From One Tear, a Tide

Tian Yu’s suffering was not in vain. The public outcry after her fall sparked global protests against Apple and forced it to confront the human cost of its supply chain. In early 2012, Foxconn announced a 16–25% pay hike, raising the basic wage in Shenzhen to 1,800 yuan a month, well above the city’s minimum. Such changes did not happen overnight, nor did they solve everything. But for the young men and women on Foxconn’s assembly lines, these changes mattered.

The turnaround is a reminder that even the mightiest companies can be moved to change. We bend the arc of innovation through the simple act of making the invisible assembly line visible and demanding better. Each uncomfortable question and each principled choice is a drop of water wearing away stone.

Slowly and relentlessly, we reshape the hidden architecture of AI toward human dignity.

See you again in two weeks. 👋

TL;DR (reader‑shareable)

AI isn’t weightless. It runs on human labor (often hidden and underpaid) and massive physical infrastructure that consumes water and energy. Treating scale as destiny leads to “so‑so automation” and community harm. The alternative is scaling by imagination: new cooling, cleaner power, dignified labeling work, and community‑first design. That shift doesn’t require miracles, just different choices from builders, buyers, and citizens. The arc of innovation bends when we make the invisible visible and insist on better.
